Beyond the Frame: AI Weekly Digest #10

Mar 23, 2026

Welcome back to the Beyond Edge AI weekly digest. This week the tools kept coming. Midjourney released V8 Alpha, and the community couldn't decide whether to love it or hate it; we tested it ourselves and have thoughts. Higgsfield launched what might be the first real AI streaming platform, OpenArt introduced persistent 3D worlds you can actually walk around in, Runway previewed real-time video generation that sounds almost too fast to believe, and Google quietly shipped a redesigned Stitch, a tool that might just make designers nervous. A big week. Let's get into it.

Midjourney V8 Alpha Release

Midjourney released V8 Alpha on March 17, and after putting it through its paces ourselves, our verdict is mixed, in the best and most interesting way. Prompt understanding has taken a real leap forward: V8 reads your intent more accurately and handles complex descriptions better than V7 ever did. But here's the flip side: getting the image you actually want is harder now. V7 was more predictable, more familiar. V8 requires more deliberate prompting, and if you're used to your go-to workflows, expect some friction while you recalibrate. Where V8 is unambiguously better is text rendering. That long-standing frustration is finally fixed, and readable, well-placed text in images is now reliable. Speed is another win: generations are significantly faster, and the new native HD mode means you no longer need a separate upscaling step, which changes the workflow in a meaningful way. The community reaction has been split down the middle, and honestly ours is too. The bones are exceptional and the direction is right, but V8 still feels like a model finding itself. It's worth testing, but not worth fully switching from V7 just yet. We ran a full comparison test ourselves; check it out on our Instagram to see exactly what we mean.

Higgsfield Original Series

Higgsfield just launched what they're calling the world's first complete AI streaming platform — Higgsfield Original Series — starting with Arena Zero, a fully AI-generated 10-minute sci-fi pilot produced by a team of 4 people in just 4 days. The ambition here is big. Alongside the debut episode, they've dropped 12 trailers across different genres — and viewers get to vote on which ones move into full production. But what makes this genuinely interesting for the AI filmmaking community isn't just the platform itself — it's the creator pathway behind it. Independent filmmakers can submit through Higgsfield contests, and the winners get grants, distribution, and monetization directly on the platform. The last action contest alone received over 8,700 submissions from 139 countries and distributed $500,000 to independent creators. Whether the quality of the content can hold up against human-produced streaming platforms is a separate conversation — but as a proof of concept that AI-native storytelling can reach a real audience at scale? Higgsfield just made the strongest case yet.

OpenArt Worlds

OpenArt just launched Worlds, a first-of-its-kind feature that builds a persistent, fully navigable 3D environment from a single text prompt or image. You can walk around freely, position your camera at any angle, drop in characters and objects, and capture production-ready shots exactly the way you envisioned them. For AI filmmakers, the implications are significant. Scene consistency has always been one of the biggest frustrations in AI content creation: every new generation risks looking like a different place. With Worlds, every environment you build lives permanently in your OpenArt library and can be revisited and reused across any project, so the setting stays consistent across all your posts, videos, and productions. You can also export in 3D Gaussian Splat format, meaning you can bring your AI-built worlds directly into Blender or Unreal Engine for further production work. The announcement hit 5.7 million views on X within days of launch, which says a lot about how badly the community has been waiting for this kind of spatial control. Available now at openart.ai/suite/world.

Runway Real-Time Video Model on Vera Rubin

Runway just unveiled a research preview of a real-time video generation model trained on NVIDIA's Vera Rubin architecture — generating HD video with a time-to-first-frame of under 100 milliseconds. To put that in context: just a few months ago, generating a single AI video clip took seconds or minutes. Now we're talking about instant. Runway also announced that Gen-4.5, their top-rated video generation model, was ported from NVIDIA Hopper to Vera Rubin in a single day, demonstrating just how ready the new infrastructure is for production-level video workloads. The near-term implications for interactive media, live production, and real-time virtual environments are hard to overstate — this is what opens the door to things like real-time AI game worlds and live-streamed generative storytelling. The catch is that right now this runs on hardware most creators won't have access to for a while. But hardware limitations don't stay limitations for long, and Runway is clearly co-designing their models alongside the cutting edge of what's possible.

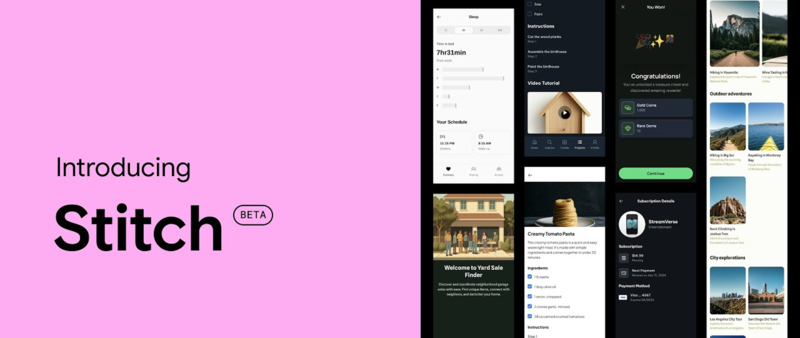

Stitch by Google

Google just released a major redesign of Stitch, their AI-native design tool, turning it into what they're calling a "vibe design" canvas where anyone can describe a UI in plain language, or even by speaking out loud, and get back a high-fidelity, production-ready interface design. The concept is essentially vibe coding applied to design: instead of learning Figma or drawing wireframes, you describe the feel and intent of what you want to build and the AI handles the layout, components, and front-end code. The update includes an infinite canvas, voice interaction, a design agent that reasons across your entire project history, and integrations with coding tools like Claude Code, Gemini CLI, and Cursor. Figma shares dropped 8% the day the update launched. Stitch is completely free, with 350 standard generations per month included. For the AI creative community, this is interesting not because it replaces Figma for serious design work (it doesn't, not yet) but because it's another step in the same direction as Luma Agents and vibe coding: the gap between having an idea and having a finished, deployable output keeps shrinking.

Want to go beyond weekly updates?

Our AI Filmmaking Course gives you a complete, practical workflow — from writing and design to directing and post-production. We keep the course updated as the tools evolve, so you always stay ahead.